Bridging the Digital and Physical Worlds with Augmented Intelligence

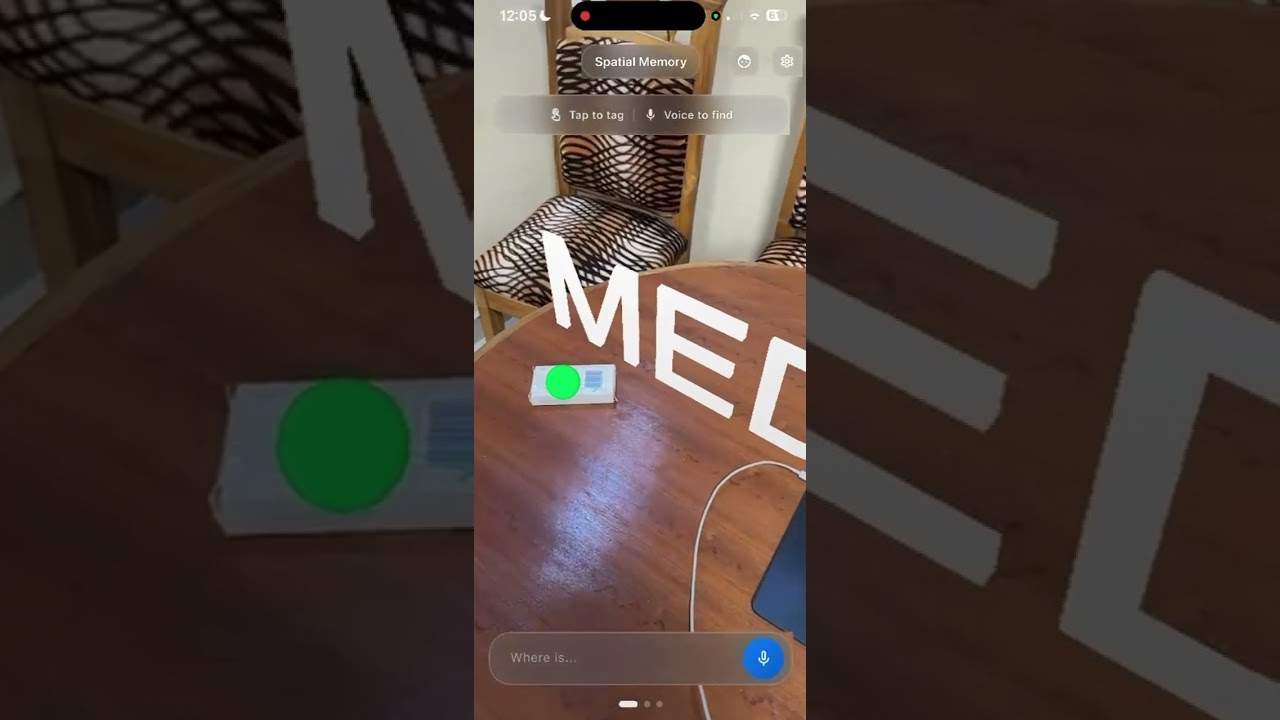

Click the thumbnail to watch the demo on YouTube Shorts

Lucid is an AR-powered intelligence layer that connects your digital world (email, calendar, calls, notes, tasks) with the physical world around you. Using augmented reality, voice commands, and local AI, Lucid helps you understand, interact with, and get contextual information about your environment in real time.

Lucid serves as an intelligent bridge between two worlds, with an intelligence layer connecting them:

- Digital World: Your emails, calendar events, calls, notes, tasks, and integrations

- Physical World: The spaces, objects, and people around you

- Intelligence Layer: Lucid overlays contextual information, answers questions, and helps you navigate both worlds seamlessly

Lucid is designed with smart glasses in mind, where your mobile phone serves as the computing engine. The AR overlay, voice-first interface, and contextual intelligence are all built to transition seamlessly to wearable AR devices.

This simplified version demonstrates the core concept:

- AR Spatial Memory: Tag and recall locations in 3D space using ARKit

- Voice Commands: Natural language interaction for finding and saving spatial memories

- Face Recognition: Recognize and recall information about people you've met

- Contextual Notes: Semantic search through your notes with AI-powered summaries

- Local AI: Privacy-first processing using on-device models (Cactus SDK)

- Real-time Overlays: Glassmorphic UI overlays that blend with your environment

Tech stack:

- Flutter: Cross-platform mobile framework

- ARKit: 3D spatial tracking and marker placement

- Cactus SDK: Local AI models for embeddings, text generation, and RAG

- Voice Recognition: Speech-to-text for natural interaction

- RAG (Retrieval Augmented Generation): Semantic search and context retrieval
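The retrieval step behind the contextual notes search can be sketched roughly as follows. This is a minimal, language-agnostic illustration in Python, not the app's Dart code: `embed` is a toy bag-of-words stand-in for the on-device embedding model, and `embed`, `cosine`, `retrieve`, and `build_prompt` are hypothetical names that do not come from the Cactus SDK.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query: str, notes: list[str], k: int = 2) -> list[str]:
    """Return the k notes most similar to the query."""
    q = embed(query)
    ranked = sorted(notes, key=lambda n: cosine(q, embed(n)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, notes: list[str], k: int = 2) -> str:
    """Assemble retrieved notes into a prompt for a text-generation model."""
    context = "\n".join(f"- {n}" for n in retrieve(query, notes, k))
    return f"Context:\n{context}\n\nQuestion: {query}"


if __name__ == "__main__":
    notes = [
        "Left my keys on the kitchen counter",
        "Meeting with Sam on Friday at 10",
        "Laptop is in the blue backpack",
    ]
    print(build_prompt("Where are my keys?", notes, k=1))
```

In a real build, `embed` would presumably call the local embedding model and the prompt would be passed to the local text-generation model for the summary; the ranking logic itself stays the same.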

To run the app:

    cd lucid_app
    flutter pub get
    flutter run

Once it is running, you can:

- Tag Locations: Tap in AR space to save spatial memories ("Where did I leave my keys?")

- Voice Queries: Ask questions naturally ("Where is my laptop?")

- Contextual Search: Get AI-summarized notes and information relevant to your query

- Face Recognition: Save and recall information about people you meet

Planned integrations:

- Email, calendar, and task management APIs

- Smart glasses SDK integration

- Enhanced vision understanding

- Multi-modal interactions

- Cross-device synchronization

Supported platforms:

- iOS (primary)

- Android (planned)

This is a hackathon project demonstrating the core concept. Future development will focus on:

- Expanding digital world integrations

- Smart glasses compatibility

- Enhanced AI capabilities

- Cross-platform support

Built for the Mobile AI Hackathon: Cactus X Nothing X Hugging Face